AI21-Jamba-Large-1.6

AI21 Jamba Large 1.6 is a hybrid SSM-Transformer foundation model that excels at long-text processing and efficient inference.
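Some simple arithmetic shows why a hybrid SSM-Transformer design helps with long inputs. Only attention layers keep a key-value (KV) cache that grows with sequence length; SSM (Mamba) layers keep a fixed-size state. The Python sketch below assumes one attention layer in every eight layers, which is the 1:7 attention-to-Mamba ratio reported for the original Jamba architecture. The layer count, head count, and head dimension are hypothetical and are not Jamba Large 1.6's actual configuration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HybridSchedule:
    """Hypothetical hybrid layout: one attention layer per block of
    `block_size` layers; every other layer is an SSM (Mamba) layer."""

    num_layers: int
    block_size: int = 8  # assumption: 1 attention : 7 Mamba layers

    def layer_types(self) -> list[str]:
        # Place the attention layer mid-block (an illustrative choice).
        mid = self.block_size // 2
        return [
            "attention" if i % self.block_size == mid else "mamba"
            for i in range(self.num_layers)
        ]

    def attention_layers(self) -> int:
        return self.layer_types().count("attention")


def kv_cache_bytes(attn_layers: int, seq_len: int, kv_heads: int,
                   head_dim: int, bytes_per_value: int = 2) -> int:
    """Keys + values stored for every attention layer and every token."""
    return 2 * attn_layers * seq_len * kv_heads * head_dim * bytes_per_value


# Hypothetical dimensions, used only to compare the two layouts.
LAYERS, SEQ_LEN, KV_HEADS, HEAD_DIM = 72, 256_000, 8, 128

hybrid = HybridSchedule(num_layers=LAYERS)
full = kv_cache_bytes(LAYERS, SEQ_LEN, KV_HEADS, HEAD_DIM)
mixed = kv_cache_bytes(hybrid.attention_layers(), SEQ_LEN, KV_HEADS, HEAD_DIM)
print(f"pure Transformer: {full / 2**30:.1f} GiB")
print(f"hybrid (1 in 8):  {mixed / 2**30:.1f} GiB")
```

With these made-up dimensions, the pure-Transformer cache at 256,000 tokens is about 70.3 GiB and the hybrid layout needs about 8.8 GiB. That 8x reduction follows directly from the layer ratio. Actual savings depend on the real model configuration.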

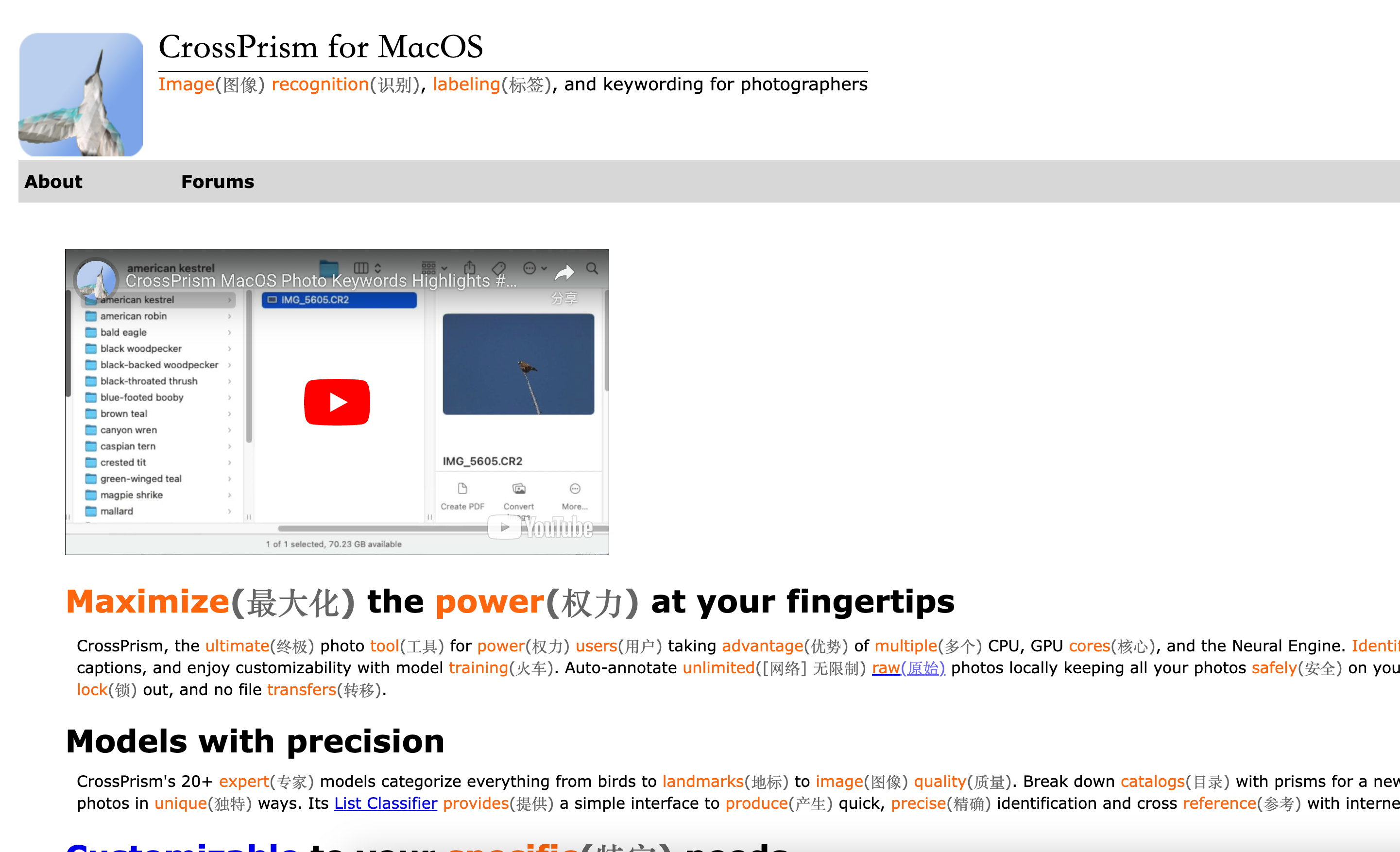


- Long-context processing with a context window of up to 256K tokens, suitable for long documents and complex tasks

- Fast inference, reportedly up to 2.5 times faster than comparable models, which significantly improves efficiency

- Multilingual support, including English, Spanish, French, and other languages, for multilingual applications

- Strong instruction following, generating high-quality text from user instructions

- Tool calling support, allowing the model to work with external tools that extend its functionality
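Tool calling usually works as a loop. The application sends tool definitions along with the prompt, the model replies with a structured call, and the application runs the tool and returns the result. The minimal Python sketch below shows the application side of that loop under stated assumptions. The OpenAI-style JSON-Schema tool format and the `tool` message shape are assumed for illustration, so check AI21's documentation for the exact format. `compound_interest` is a hypothetical tool. No model is called here, and the tool call is written by hand.

```python
import json
from typing import Any, Callable

# Hypothetical tool definition in an assumed OpenAI-style JSON-Schema format.
COMPOUND_INTEREST_TOOL = {
    "type": "function",
    "function": {
        "name": "compound_interest",
        "description": "Future value of a principal compounded annually.",
        "parameters": {
            "type": "object",
            "properties": {
                "principal": {"type": "number"},
                "rate": {"type": "number", "description": "Annual rate, e.g. 0.05"},
                "years": {"type": "integer"},
            },
            "required": ["principal", "rate", "years"],
        },
    },
}


def compound_interest(principal: float, rate: float, years: int) -> dict[str, float]:
    """Hypothetical tool implementation: annual compounding."""
    return {"amount": round(principal * (1 + rate) ** years, 2)}


# Map tool names (as the model would emit them) to local Python functions.
REGISTRY: dict[str, Callable[..., Any]] = {"compound_interest": compound_interest}


def dispatch(tool_call: dict[str, Any]) -> dict[str, str]:
    """Execute one tool call and wrap the result as a tool-role message."""
    name = tool_call["function"]["name"]
    if name not in REGISTRY:
        raise KeyError(f"unknown tool: {name}")
    # Arguments are assumed to arrive as a JSON-encoded string.
    args = json.loads(tool_call["function"]["arguments"])
    result = REGISTRY[name](**args)
    return {"role": "tool", "tool_call_id": tool_call["id"], "content": json.dumps(result)}


# Hand-written stand-in for a tool call the model might return.
example_call = {
    "id": "call_1",
    "type": "function",
    "function": {
        "name": "compound_interest",
        "arguments": json.dumps({"principal": 1000, "rate": 0.05, "years": 2}),
    },
}
print(dispatch(example_call))
```

The dispatcher never evaluates model output as code because tools live in a registry keyed by name. It only looks up a known function and passes it JSON-decoded keyword arguments.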

Product Details

AI21-Jamba-Large-1.6 is a hybrid SSM-Transformer foundation model developed by AI21 Labs and designed specifically for long-text processing and efficient inference. It performs well on long-context tasks, inference speed, and output quality. It also supports multiple languages and follows instructions reliably. It suits enterprise-level applications that process large volumes of text, such as financial analysis and content generation. The model is released under the Jamba Open Model License, which permits research and commercial use subject to the license terms.
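For enterprise workloads like these, a common first step is to check whether a document fits in the context window and split it if it does not. The Python sketch below is a rough illustration only. It estimates tokens from a word count using an assumed ratio of 1.3 tokens per word and treats the window as 256,000 tokens. Both values are assumptions, and exact counts require the model's own tokenizer.

```python
import math


def estimate_tokens(text: str, tokens_per_word: float = 1.3) -> int:
    """Crude token estimate from whitespace-separated words (assumed ratio)."""
    return math.ceil(len(text.split()) * tokens_per_word)


def split_for_context(
    text: str,
    context_tokens: int = 256_000,  # assumed size of the 256K window
    reserved_output: int = 4_000,   # tokens left free for the model's answer
    tokens_per_word: float = 1.3,
) -> list[str]:
    """Return the text as one piece if it fits, else word-based chunks that do."""
    budget = context_tokens - reserved_output
    if budget <= 0:
        raise ValueError("reserved_output must be smaller than context_tokens")
    if estimate_tokens(text, tokens_per_word) <= budget:
        return [text]
    words = text.split()
    words_per_chunk = max(1, int(budget / tokens_per_word))
    # Chunks are re-joined with single spaces, so original line breaks are lost.
    return [
        " ".join(words[i : i + words_per_chunk])
        for i in range(0, len(words), words_per_chunk)
    ]


report = "revenue grew " * 200_000  # 400,000 words of placeholder text
chunks = split_for_context(report)
print(len(chunks), [estimate_tokens(c) for c in chunks])
```

Splitting on word boundaries keeps the sketch dependency-free. A production pipeline would more likely split on section or paragraph boundaries and count tokens with the model's tokenizer.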